AI - Why Architecture matters more than ever.

How do we automate Architecture efforts with AI?

Is this really the right question to ask?

It sounds logical, but it rarely leads to the highest return on investment. The better question to ask is:

What are architectural preconditions for AI agents to function reliably, economically, and at scale?

There is a growing assumption that the need to clean up enterprise IT landscapes will diminish as AI systems become more capable. Agent-based AI promises to work with unstructured data, undocumented interfaces, and heterogeneous legacy environments.

If intelligent agents can navigate chaos, why invest in order?

This assumption does not hold under closer technical, architectural, and economic considerations. Not everything needs to be modernized. But the right things must be. Enterprise Architecture becomes the vehicle that decides where investment is mandatory and where technical debt can remain tolerated.

The illusion of autonomous agents

Agents understand natural language, orchestrate workflows across systems, and solve problems autonomously. In controlled demonstrations, an agent retrieves information from multiple systems and produces coherent outcomes with minimal configuration.

The implicit conclusion is seductive: if agents are intelligent enough, the underlying architecture becomes secondary.

What these narratives hide is the difference between a demo environment and a production enterprise landscape. Under the surface of every AI initiative sit legacy systems, fragmented data models, undocumented integration logic, and accumulated operational shortcuts. The question is not whether an AI agent can technically operate in such an environment, but under which conditions it does so predictably, cost-efficiently, and in a way that is in line with governance and compliance.

Answering that question requires an understanding of the concrete limitations of today's AI capabilities.

Context window constraints - why structure still matters

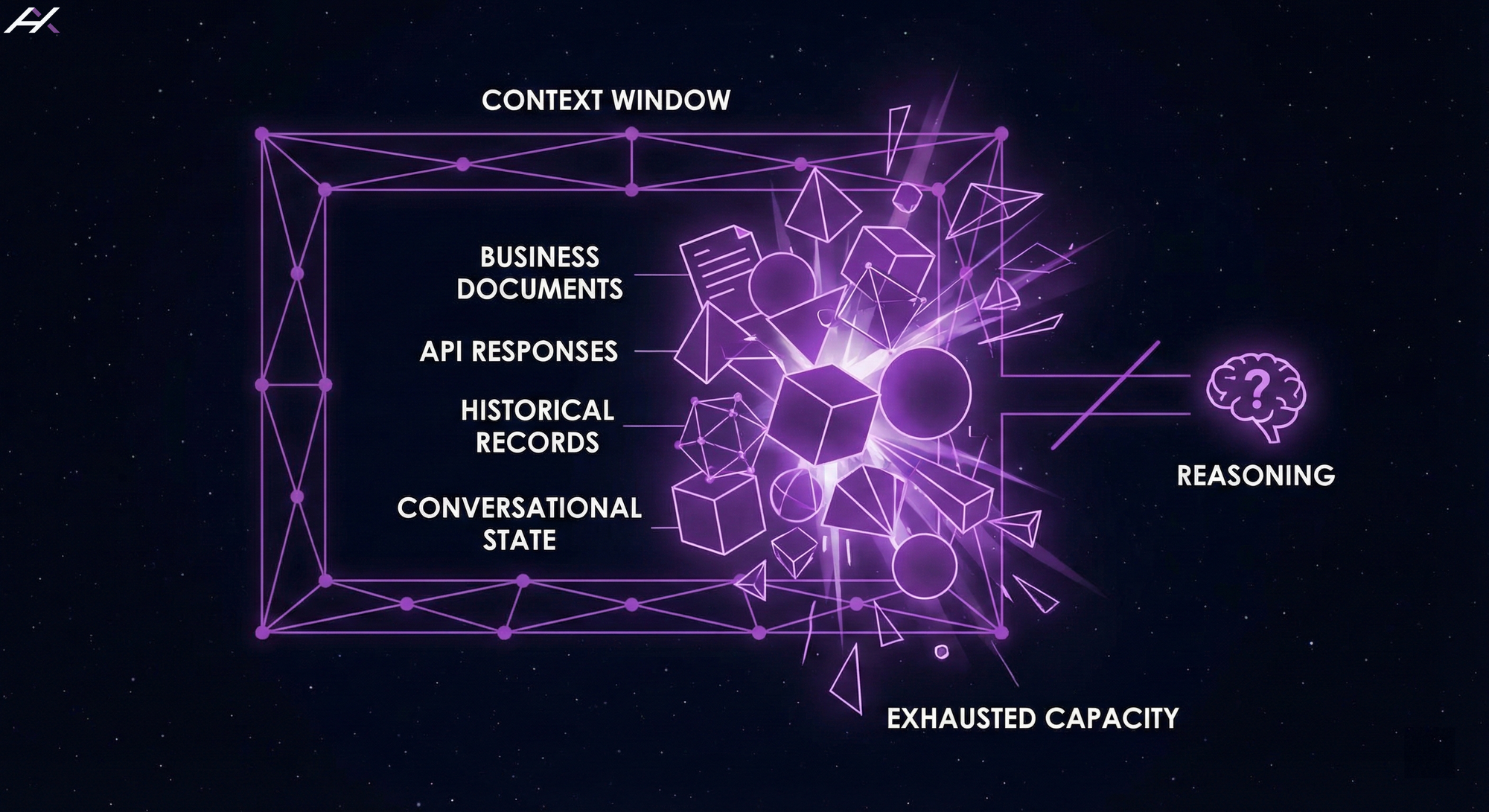

Large Language Models operate within a bounded context window. Depending on the model, this ranges from several thousand to a few hundred thousand tokens. While this appears generous, it is quickly exhausted in enterprise scenarios.

Business documents, API responses, historical records, and conversational state all compete for limited context capacity. When an agent aggregates information from multiple poorly structured systems, the context fills up before any meaningful reasoning begins.

RAG (Retrieval Augmented Generation) can mitigate this problem, but only if the underlying data is semantically indexed, and accessible in a predictable way. Larger context windows do not eliminate the issue; they amplify the cost and increase the probability of degraded reasoning as irrelevant or redundant information accumulates.